Transition Rate

From Open Risk Manual

Revision as of 18:42, 21 December 2020 by Wiki admin

Definition

A Transition Rate is a key property of a multi-state stochastic system (e.g. a Markov Chain). It measures the probability (per unit of time) that an event (state transition) occurs within an infinitesimally small time interval.

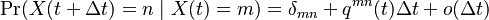

Mathematically, if $X_t$ is a stochastic process, its transition rates are defined as follows:

- Let us assume a state space with D+1 distinct states: $S = \{0, 1, \ldots, D\}$.

- The rate of moving from state m to state n (with $m \neq n$) in an infinitesimal time $dt$ is $q_{mn}$, represented as:

$$q_{mn} = \lim_{dt \to 0} \frac{P(X_{t+dt} = n \mid X_t = m)}{dt}$$
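As a numerical illustration of this limiting definition, the sketch below uses a two-state chain, for which the probability of having moved from state 0 to state 1 after time t has a well-known closed form. The rate values `a` and `b` and the helper `p01` are hypothetical choices made for this example only. Dividing the transition probability by ever smaller time steps should approach the rate `a`, which here is deliberately chosen larger than one.

```python
import math


def p01(t, a, b):
    """Probability of being in state 1 at time t, starting in state 0,
    for a two-state chain with rate a (0 -> 1) and rate b (1 -> 0)."""
    return a / (a + b) * (1.0 - math.exp(-(a + b) * t))


# Hypothetical rates (per unit of time); note that a exceeds unity.
a, b = 2.0, 0.5

# Probability of a 0 -> 1 transition divided by the length of the interval.
steps = (1.0, 0.1, 0.01, 0.001)
ratios = [p01(dt, a, b) / dt for dt in steps]

for dt, ratio in zip(steps, ratios):
    print(f"dt = {dt:<6} P(0 -> 1 within dt) / dt = {ratio:.4f}")
```

As the step shrinks, the printed ratio moves towards the rate `a`, while the probability itself always stays below one.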

Properties

- Since transition rates refer to the probabilities of transitions per unit of time, the rates between distinct states must be non-negative (but, unlike probabilities, they need not be less than unity)

- The collection of all transition rates forms a Transition Rate Matrix that satisfies further properties
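A minimal sketch of how such a matrix might be assembled is shown below. It assumes the usual generator-matrix convention, in which each diagonal entry is set to minus the sum of the off-diagonal rates in its row, so that every row sums to zero. The function name `build_rate_matrix` and the three-state example rates are hypothetical and serve only as illustration.

```python
def build_rate_matrix(rates, n_states):
    """Assemble a transition rate matrix from off-diagonal rates.

    rates maps (m, n) pairs with m != n to non-negative rates.
    Diagonal entries are set so that each row sums to zero
    (an assumed convention, the usual one for generator matrices).
    """
    q = [[0.0] * n_states for _ in range(n_states)]
    for (m, n), rate in rates.items():
        if m == n:
            raise ValueError("only off-diagonal rates may be supplied")
        if rate < 0:
            raise ValueError("transition rates must be non-negative")
        q[m][n] = rate
    for m in range(n_states):
        q[m][m] = -sum(q[m][n] for n in range(n_states) if n != m)
    return q


# Hypothetical three-state example; state 2 has no outgoing transitions.
rates = {(0, 1): 0.10, (0, 2): 0.02, (1, 0): 0.05, (1, 2): 0.20}
Q = build_rate_matrix(rates, 3)

for row in Q:
    print(row)
```

A state with no outgoing transitions (state 2 here) ends up with a row of zeros, which is how an absorbing state appears in this representation.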

Issues and Challenges

The terminology around transition matrix quantities can be confusing because the same terms are used in slightly different contexts:

- When modelling stochastic processes in continuous time, the transition rate is distinct from the Transition Probability, which measures transition frequencies over a finite time period

- When estimating transition phenomena, the accumulation of statistics is always over a finite period, yet the term "transition rate" is frequently still used
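The distinction between a rate and a finite-period probability can be made concrete with a small sketch. For a time-homogeneous chain, the transition probabilities over a period t are given by the matrix exponential of the rate matrix multiplied by t. The code below approximates that exponential with a truncated Taylor series, which is adequate only for small, well-scaled matrices. The two-state rate matrix `Q` and the helper names are hypothetical choices for this example.

```python
import math


def mat_mul(a, b):
    """Multiply two square matrices given as lists of lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def transition_probabilities(q, t, terms=40):
    """Approximate exp(q * t) with a truncated Taylor series."""
    n = len(q)
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms):
        # term becomes (q * t)^k / k!
        scaled = [[q[i][j] * t / k for j in range(n)] for i in range(n)]
        term = mat_mul(term, scaled)
        result = [[result[i][j] + term[i][j] for j in range(n)]
                  for i in range(n)]
    return result


# Hypothetical two-state rate matrix: rate 2.0 for 0 -> 1, rate 0.5 for 1 -> 0.
Q = [[-2.0, 2.0], [0.5, -0.5]]
P = transition_probabilities(Q, t=1.0)

# Closed-form 0 -> 1 probability for a two-state chain, used as a cross-check.
closed_form_p01 = 2.0 / 2.5 * (1.0 - math.exp(-2.5))

print("rate 0 -> 1:", Q[0][1])
print("probability 0 -> 1 over one period:", P[0][1])
print("closed form:", closed_form_p01)
```

The rate exceeds one while the one-period probability remains below one, which is why the two quantities should not be used interchangeably. In practice a library routine such as `scipy.linalg.expm` would normally be preferred over a hand-written series.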

References